Audio Editor 1.0

A useful application!

I posted a lot of experimental stuff on this weblog over the past year, or components that might be useful once built into proper applications. But this is my first web app that’s useful on its own.

This is a simple Flash audio editor that works in the browser. Everything it does is done by ActionScript code inside the application; there’s no backend doing the difficult work. So it would work just as well in the standalone Flash Player, or exported as an AIR application, as it does in the browser.

This audio editor has only a very basic set of features. Here are the things it can do:

- Open mono and stereo WAV and MP3 files.

- Select part of the audio file by graphically adjusting the start and end point.

- Change the pitch by adjusting the playback speed.

- Reverse the file so it plays backwards.

- The result of these adjustments (start and end, pitch, reverse) can be saved as a new stereo WAV file.
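The last item, saving the result as a stereo WAV file, is the one step that has to produce an exact binary file format. The post doesn’t show how the editor writes the file, so here is a minimal TypeScript sketch that encodes two channels of float samples as a WAV file with the standard 44-byte header. The 16-bit PCM sample format, the 44.1 kHz default and the function name `encodeStereoWav` are assumptions for illustration, not details taken from the editor.

```typescript
// Hypothetical helper: encode two channels of float samples (-1..1) as a
// 16-bit PCM stereo WAV file. Bit depth and sample rate are assumptions;
// the post only says the result is saved as a stereo WAV file.
function encodeStereoWav(
  left: Float32Array,
  right: Float32Array,
  sampleRate = 44100,
): Uint8Array {
  if (left.length !== right.length) {
    throw new Error("left and right channels must have the same length");
  }
  const numChannels = 2;
  const bytesPerSample = 2; // 16-bit PCM
  const blockAlign = numChannels * bytesPerSample; // bytes per stereo frame
  const dataSize = left.length * blockAlign;
  const buffer = new ArrayBuffer(44 + dataSize);
  const view = new DataView(buffer);

  const writeAscii = (offset: number, text: string): void => {
    for (let i = 0; i < text.length; i++) {
      view.setUint8(offset + i, text.charCodeAt(i));
    }
  };

  // RIFF header; all multi-byte fields in a WAV file are little-endian.
  writeAscii(0, "RIFF");
  view.setUint32(4, 36 + dataSize, true); // file size minus the first 8 bytes
  writeAscii(8, "WAVE");

  // "fmt " chunk describing uncompressed PCM.
  writeAscii(12, "fmt ");
  view.setUint32(16, 16, true); // size of the fmt chunk body
  view.setUint16(20, 1, true); // audio format 1 = PCM
  view.setUint16(22, numChannels, true);
  view.setUint32(24, sampleRate, true);
  view.setUint32(28, sampleRate * blockAlign, true); // byte rate
  view.setUint16(32, blockAlign, true);
  view.setUint16(34, bytesPerSample * 8, true); // bits per sample

  // "data" chunk: interleaved left/right frames.
  writeAscii(36, "data");
  view.setUint32(40, dataSize, true);
  let offset = 44;
  for (let i = 0; i < left.length; i++) {
    for (const sample of [left[i], right[i]]) {
      const clamped = Math.max(-1, Math.min(1, sample)); // avoid overflow
      view.setInt16(offset, Math.round(clamped * 32767), true);
      offset += bytesPerSample;
    }
  }
  return new Uint8Array(buffer);
}
```

In the Flash version the same bytes would presumably be written to a ByteArray with little-endian byte order and offered to the user with `FileReference.save()`, which Flash Player 10 provides.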

The Flash file

Download the source files here.

Code

This project is built on the PureMVC framework again. It’s what I’ve been using for all my projects over the last half year, and I’m still really happy with it. The code gets more complicated with each project, but I still have a clear overview of where everything is, and I can even find my way around older projects quickly.

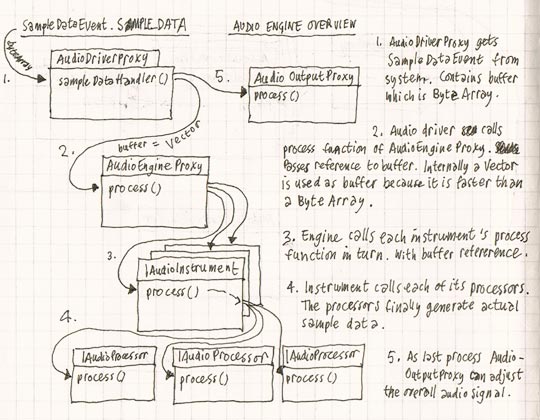

Here’s a short overview of the main building blocks of the audio engine:

Audio engine overview

- AudioDriverProxy listens to the SampleDataEvent. On each event it sends a request to the audio engine (AudioEngineProxy) to fill the buffer with samples.

- AudioEngineProxy holds all the instruments (of type IAudioInstrument) that produce sound. When it receives the request for samples from the audio driver it passes this request on to all the instruments that are registered with the engine.

- IAudioInstrument is (the interface for) a general instrument. An instrument doesn’t generate sound itself, but it maintains a list of IAudioProcessor objects that produce the actual audio. Each processor is a note that the instrument is currently playing. So if an instrument were to play a three-note chord, three processors would be added to the list, and when a note is finished its processor is removed from the list. Besides that, IAudioInstrument has an API for playing the instrument: MIDI-inspired functions like noteOn(), noteOff() and programChange().

- IAudioProcessor is the object that produces sound. When an instrument receives a buffer from the audio engine, it passes the buffer on to each of its processors. Each processor loops through all the samples in the buffer and adds its own values to them. An IAudioProcessor can generate sound like a synthesizer or play back existing sound like a sampler; it doesn’t matter, as long as it implements the IAudioProcessor interface.

- AudioOutputProxy is the last stage in the audio generation process. Once all instruments have contributed their sounds to the buffer, the audio output is the last to receive the buffer of sample data from the audio engine. Here, final adjustments to the sound can be made. In the audio editor the only adjustment is the overall output volume.
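To make the flow through these building blocks concrete, here is a small TypeScript model of the same pipeline. It is not the author’s ActionScript code and it leaves PureMVC out; the class names echo the post, but every method signature, the `SineProcessor` example voice, the `isFinished` flag and the 44.1 kHz rate are assumptions. What it illustrates is the order of operations: all instruments add into one shared buffer, processors for finished notes are dropped, and the output stage only touches the buffer after every instrument is done.

```typescript
// Hypothetical model of the engine pipeline. Names echo the post;
// all signatures are assumptions, not the original ActionScript API.
interface IAudioProcessor {
  readonly isFinished: boolean;
  // Adds this voice's samples to the buffers (mixing, never overwriting).
  process(left: Float32Array, right: Float32Array): void;
}

interface IAudioInstrument {
  noteOn(pitch: number, velocity: number): void;
  noteOff(pitch: number): void;
  programChange(program: number): void;
  process(left: Float32Array, right: Float32Array): void;
}

// Example processor: a sine tone for one MIDI note number.
class SineProcessor implements IAudioProcessor {
  isFinished = false;
  private phase = 0;
  constructor(readonly pitch: number, private readonly gain: number) {}

  process(left: Float32Array, right: Float32Array): void {
    const freq = 440 * Math.pow(2, (this.pitch - 69) / 12);
    const step = (2 * Math.PI * freq) / 44100; // assumes 44.1 kHz output
    for (let i = 0; i < left.length; i++) {
      const v = this.gain * Math.sin(this.phase);
      left[i] += v;
      right[i] += v;
      this.phase += step;
    }
  }
}

// One processor per sounding note; finished notes are removed.
class SimpleInstrument implements IAudioInstrument {
  private processors: SineProcessor[] = [];

  noteOn(pitch: number, velocity: number): void {
    this.processors.push(new SineProcessor(pitch, velocity / 127));
  }
  noteOff(pitch: number): void {
    for (const p of this.processors) {
      if (p.pitch === pitch) p.isFinished = true;
    }
  }
  programChange(_program: number): void {
    // A sine voice has a single "program", so there is nothing to switch.
  }
  get activeNotes(): number {
    return this.processors.length;
  }
  process(left: Float32Array, right: Float32Array): void {
    this.processors = this.processors.filter((p) => !p.isFinished);
    for (const p of this.processors) p.process(left, right);
  }
}

// Last stage: final adjustments, here only the master volume.
class AudioOutput {
  constructor(public volume = 1) {}
  process(left: Float32Array, right: Float32Array): void {
    for (let i = 0; i < left.length; i++) {
      left[i] *= this.volume;
      right[i] *= this.volume;
    }
  }
}

class AudioEngine {
  private instruments: IAudioInstrument[] = [];
  constructor(readonly output: AudioOutput) {}
  addInstrument(instrument: IAudioInstrument): void {
    this.instruments.push(instrument);
  }
  // Requested by the driver: every instrument mixes into the same
  // zeroed buffer, then the output stage receives the result.
  fillBuffer(frames: number): [Float32Array, Float32Array] {
    const left = new Float32Array(frames);
    const right = new Float32Array(frames);
    for (const instrument of this.instruments) instrument.process(left, right);
    this.output.process(left, right);
    return [left, right];
  }
}

// Driver stand-in: returns interleaved left/right values, the layout a
// Flash SampleDataEvent handler writes into event.data.
function onSampleData(engine: AudioEngine, frames = 2048): Float32Array {
  const [left, right] = engine.fillBuffer(frames);
  const interleaved = new Float32Array(frames * 2);
  for (let i = 0; i < frames; i++) {
    interleaved[2 * i] = left[i];
    interleaved[2 * i + 1] = right[i];
  }
  return interleaved;
}
```

In Flash Player 10 a SampleDataEvent handler must supply between 2048 and 8192 stereo samples per event, written as interleaved floats with `writeFloat()`; `onSampleData` returns that same layout as an array instead of writing to a ByteArray.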

The audio editor is a really simple implementation of this audio engine: There is only one instrument – the editor itself (WaveEditorProxy) – and it can play only one sound at a time: the audio being edited (PitchedSampleProcessor as IAudioProcessor).
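A sampler-style processor for the editor has to combine all three edits from the feature list: play only the selected range, step through it at a speed other than 1 to change the pitch, and optionally step backwards. The sketch below, a hypothetical `PitchedSampler` class in TypeScript, does this with a fractional read position and linear interpolation between neighboring frames. The constructor parameters and the choice of linear interpolation are assumptions; the post doesn’t say how PitchedSampleProcessor resamples the audio.

```typescript
// Hypothetical sampler voice in the spirit of PitchedSampleProcessor:
// plays the selected range of a stereo sample at a variable speed,
// optionally backwards. Not the author's implementation.
class PitchedSampler {
  isFinished = false;
  private position: number; // fractional read position, in frames

  constructor(
    private readonly left: Float32Array,
    private readonly right: Float32Array,
    private readonly start: number, // first selected frame
    private readonly end: number, // one past the last selected frame
    private readonly speed = 1, // 2 = one octave up, 0.5 = one octave down
    private readonly reverse = false,
  ) {
    if (!(start >= 0 && start < end && end <= left.length) || speed <= 0) {
      throw new RangeError("invalid selection or speed");
    }
    // Reversed playback starts at the last selected frame.
    this.position = reverse ? end - 1 : start;
  }

  // Adds up to outLeft.length frames to the output buffers. The read
  // position is kept between calls, so the sample can span many buffers.
  process(outLeft: Float32Array, outRight: Float32Array): void {
    const step = this.reverse ? -this.speed : this.speed;
    for (let i = 0; i < outLeft.length && !this.isFinished; i++) {
      const index = Math.floor(this.position);
      const frac = this.position - index;
      // Linear interpolation; the neighbor is clamped to the selection.
      const next = Math.min(index + 1, this.end - 1);
      outLeft[i] += this.left[index] + (this.left[next] - this.left[index]) * frac;
      outRight[i] += this.right[index] + (this.right[next] - this.right[index]) * frac;
      this.position += step;
      if (this.position < this.start || this.position > this.end - 1) {
        this.isFinished = true; // stepped past either end of the selection
      }
    }
  }
}
```

When the processor steps past either end of the selection it sets `isFinished`, which is the signal an instrument could use to remove it from its list of active processors.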

The Harrington 1200, a state of the art songwriting computer.

Hi Nick, thanks.

The assets.fla was probably made in Flash CS4, so that must be the problem.

Yes sure, feel free to use it, change it, add to it or do whatever you want with it.

Is it ok to upload and add your audio editor to my website or blog?

Great stuff.

Thanx

This is extremely impressive. I would like to adapt this project; however, I am unable to open your source files on my MacBook Pro (I am getting a ‘Failed to open document’ error). I am using Adobe Flash CS3 Professional. Do you know of any way around this?

Again, really nice work. I hope I can use this as a foundation in the future.